Proxy Benchmark

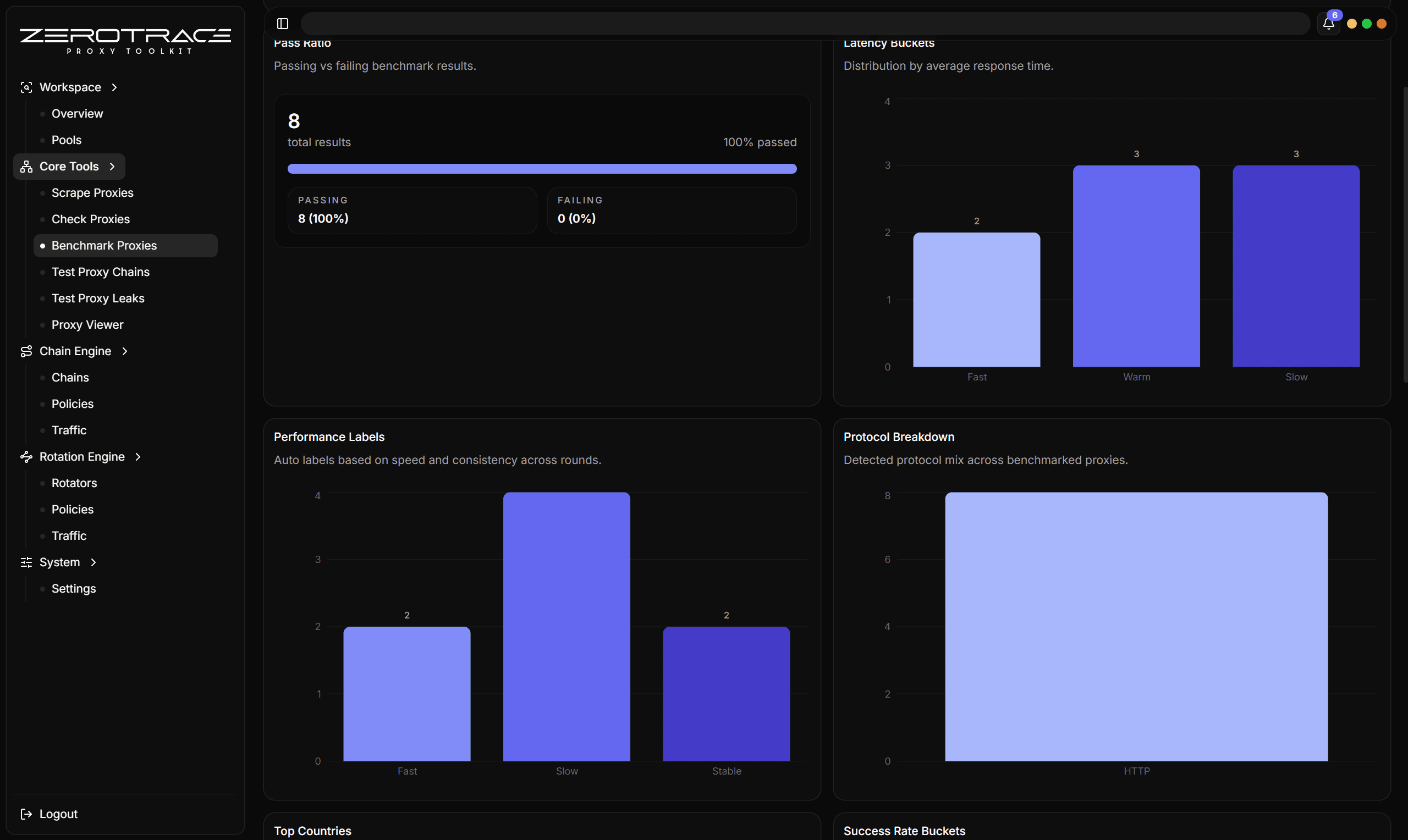

Re-test proxies across multiple rounds to measure stability, latency, and pass rate.

Benchmarking answers a different question than checking. A check asks "does it work now?" A benchmark asks "does it keep working across repeated attempts, and how fast is it?"
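To make the distinction concrete, here is a minimal Python sketch. It assumes a hypothetical `probe` callable that performs one check and raises on failure. `run_rounds` and `RoundResult` are illustrative names, not the tool's API. A check corresponds to a single round. A benchmark repeats the same probe and records each outcome and its latency.

```python
import time
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class RoundResult:
    ok: bool
    latency_ms: Optional[float]  # recorded only for successful rounds
    error: Optional[str] = None


def run_rounds(probe: Callable[[], None], rounds: int) -> List[RoundResult]:
    """Call the same (hypothetical) probe repeatedly; a plain check is rounds=1."""
    results: List[RoundResult] = []
    for _ in range(rounds):
        start = time.perf_counter()
        try:
            probe()  # assumed contract: returns on success, raises on failure
        except Exception as exc:
            results.append(RoundResult(ok=False, latency_ms=None, error=str(exc)))
            continue
        elapsed_ms = (time.perf_counter() - start) * 1000
        results.append(RoundResult(ok=True, latency_ms=elapsed_ms))
    return results
```

A proxy that passes once and then times out looks healthy to a single check. The per-round list exposes the failure.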

When To Benchmark

Benchmark after checking, not before. The best input is a filtered set of alive candidates from Proxy Checker or a saved pool that was recently checked.

Controls

| Control | Recommendation |
|---|---|
| Rounds | Start with 3. Increase when stability matters more than benchmark speed. |
| Concurrency | Keep it moderate for large lists. Too many simultaneous requests can saturate your connection and inflate measured latency. |
| Protocol hint | Use Auto for mixed lists. |
| Request URL | Point it at the target you will actually use when you need reliability numbers for that target. |
| Expected status codes | Match your target's real success conditions. |
| Resolve country | Enable if location or network type will matter in final selection. |

Benchmark rounds default to 3 and are capped at 10.
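The round limits and status matching can be sketched as a small settings object. The default of 3 and cap of 10 come from this page. The lower bound of 1, the `{200}` default status set, the placeholder URL, and the names `BenchmarkSettings`, `clamp_rounds`, and `is_pass` are all hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional

DEFAULT_ROUNDS = 3  # documented default
MAX_ROUNDS = 10     # documented cap


def clamp_rounds(requested: Optional[int]) -> int:
    """Fall back to the default when unset; keep within 1..MAX_ROUNDS (lower bound assumed)."""
    if requested is None:
        return DEFAULT_ROUNDS
    return max(1, min(requested, MAX_ROUNDS))


@dataclass
class BenchmarkSettings:
    rounds: int = DEFAULT_ROUNDS
    expected_status: FrozenSet[int] = frozenset({200})  # hypothetical default
    protocol_hint: str = "auto"
    request_url: str = "https://example.com/"  # placeholder target
    resolve_country: bool = False

    def __post_init__(self) -> None:
        # Apply the cap no matter what the caller asked for.
        self.rounds = clamp_rounds(self.rounds)

    def is_pass(self, status: int) -> bool:
        """A round passes only if the status is one the target really treats as success."""
        return status in self.expected_status
```

For example, `BenchmarkSettings(rounds=50, expected_status=frozenset({200, 204}))` ends up with `rounds == 10` and treats a 204 as a pass.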

Result Fields

| Field | Meaning |
|---|---|
| Pass count | Number of successful rounds. |
| Fail count | Number of failed rounds. |
| Success rate | Passes divided by total rounds. |
| Average latency | Mean latency of successful rounds. |
| Best latency | Fastest successful round. |
| Worst latency | Slowest successful round. |
| HTTPS support | Whether a successful sample supported HTTPS. |
| Last error | Most recent useful failure detail. |
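The stability fields above reduce to simple arithmetic over per-round outcomes. The following is a minimal sketch that assumes each round is a `(passed, latency_ms)` pair, with `None` latency for failures. `summarize` and `Summary` are illustrative names. Latency statistics use successful rounds only, matching the table.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List, Optional, Tuple

# One round: (passed, latency_ms); latency is None for failed rounds.
Round = Tuple[bool, Optional[float]]


@dataclass
class Summary:
    pass_count: int
    fail_count: int
    success_rate: float
    avg_latency_ms: Optional[float]
    best_latency_ms: Optional[float]
    worst_latency_ms: Optional[float]


def summarize(rounds: List[Round]) -> Summary:
    # Only successful rounds contribute latency samples.
    latencies = [lat for ok, lat in rounds if ok and lat is not None]
    passes = sum(1 for ok, _ in rounds if ok)
    total = len(rounds)
    return Summary(
        pass_count=passes,
        fail_count=total - passes,
        success_rate=passes / total if total else 0.0,
        avg_latency_ms=mean(latencies) if latencies else None,
        best_latency_ms=min(latencies) if latencies else None,
        worst_latency_ms=max(latencies) if latencies else None,
    )
```

With rounds of 120 ms (pass), a failure, and 180 ms (pass), this yields 2 passes, 1 failure, a success rate of about 0.67, and average, best, and worst latencies of 150, 120, and 180 ms.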

Details That Matter

| Feature | Detail |
|---|---|
| Rounds | Defaults to 3 rounds and caps at 10 rounds. |
| Checker reuse | Uses checker-style protocol detection, expected status, HTTPS support, location resolution, timeout, and User-Agent controls. |
| Best sample | Chooses the best successful sample by latency when at least one round passes. |
| All-fail memory | Keeps useful failure detail even when every round fails. |
| Stability view | Pass count, fail count, success rate, average latency, best latency, and worst latency make unstable proxies visible. |
| Enrichment reuse | Exit IP and country/location data follow the best successful sample. |
| Pool use | Benchmarking a pool is the fastest way to separate "alive once" from "reliable enough to reuse." |
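Best-sample selection and all-fail memory can be sketched as two small helpers. This assumes each round keeps its own enrichment data (exit IP, country), so that data follows whichever sample wins. `Sample`, `best_sample`, and `last_useful_error` are hypothetical names. Treating an empty error string as "not useful" is also an assumption.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Sample:
    ok: bool
    latency_ms: Optional[float] = None
    exit_ip: Optional[str] = None   # enrichment captured with this round
    country: Optional[str] = None
    error: Optional[str] = None


def best_sample(samples: List[Sample]) -> Optional[Sample]:
    """Lowest-latency passing sample, or None when every round failed."""
    passing = [s for s in samples if s.ok and s.latency_ms is not None]
    return min(passing, key=lambda s: s.latency_ms, default=None)


def last_useful_error(samples: List[Sample]) -> Optional[str]:
    """Most recent non-empty failure detail; still available if all rounds fail."""
    for s in reversed(samples):
        if not s.ok and s.error:
            return s.error
    return None
```

Because exit IP and country live on the sample, reporting `best_sample(...).country` keeps the location consistent with the latency that was chosen.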

Picking Winners

For general use, prioritize:

- high success rate

- HTTPS support

- low average latency

- low worst latency

- clean leak-test result

- desired country or network type

The fastest single round is not always the best candidate. A proxy with a slightly slower average and 100% success rate is usually better than a proxy with one fast pass and repeated failures.
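One way to encode these priorities is a lexicographic sort key. The following minimal sketch assumes success rate dominates, then HTTPS support, then average latency, then worst latency. The page lists these priorities but does not say they are applied strictly in that order. Leak-test results and country or network filters are left out and would be applied as separate filters. `Candidate` and `rank_key` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Candidate:
    proxy: str
    success_rate: float               # 0.0 to 1.0
    https: bool
    avg_latency_ms: Optional[float]   # None if no round passed
    worst_latency_ms: Optional[float]


def rank_key(c: Candidate):
    """Smaller keys sort first: higher success, HTTPS, lower avg, lower worst."""
    inf = float("inf")
    return (
        -c.success_rate,
        not c.https,  # False sorts before True, so HTTPS-capable proxies come first
        c.avg_latency_ms if c.avg_latency_ms is not None else inf,
        c.worst_latency_ms if c.worst_latency_ms is not None else inf,
    )
```

Under this key, a proxy at 100% success with a 310 ms average outranks one with a single 90 ms pass out of three rounds. This matches the guidance above.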

Best Next Steps

- Send stable benchmark winners to Test Proxy Leaks.

- Save stable rows to a pool with tags such as `benchmarked`, `stable`, and `https`.

- Export final rows only after leak testing if anonymity matters.